Analog foundations

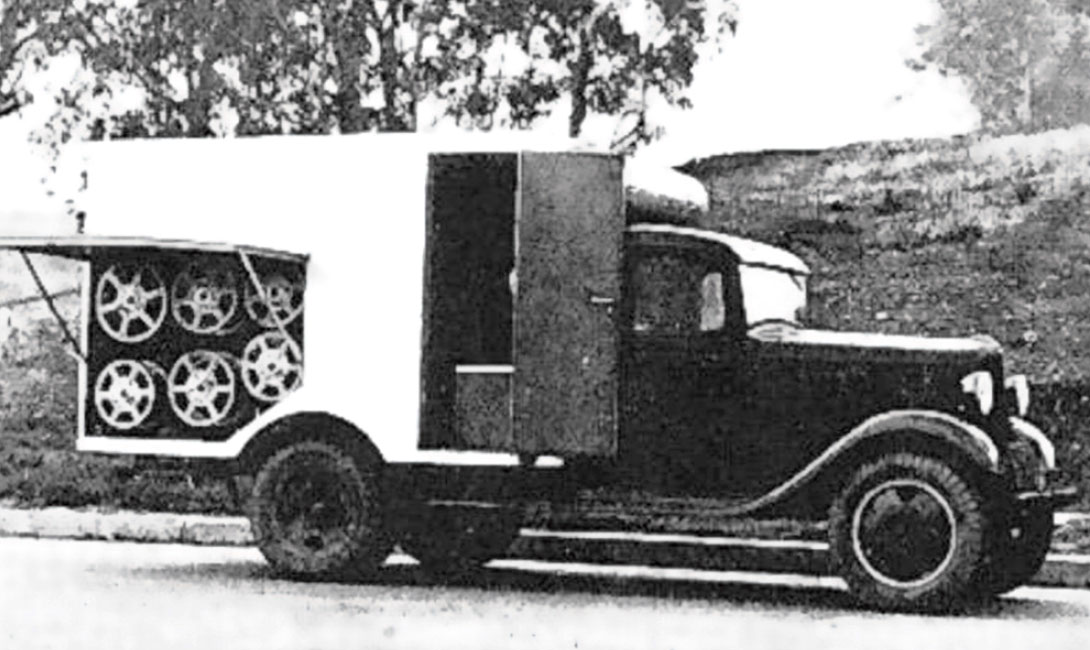

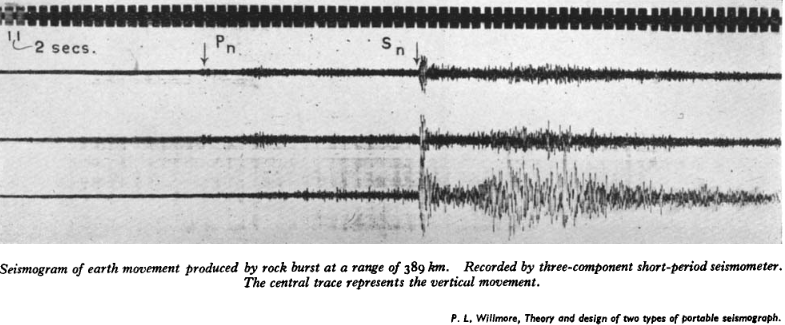

Reflection seismology became a practical exploration tool before digital computing existed. Recording and processing were largely analog, and interpretation depended heavily on manual workflows.

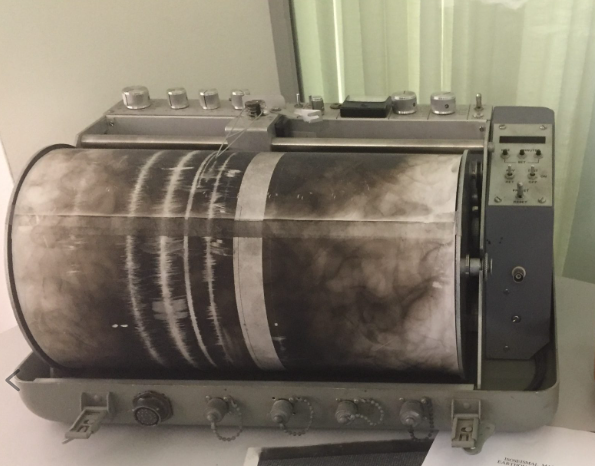

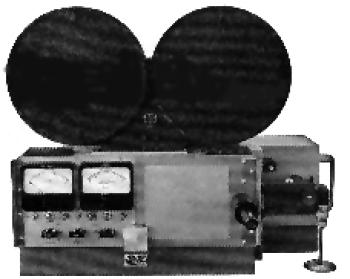

- Early signal chains used geophones, galvanometers, analog amplifiers, and photographic or drum-based recording media.

- Core algorithmic ideas such as stacking, velocity analysis, and migration were already emerging.

- Common Depth Point (CDP) stacking, Normal Moveout (NMO) correction, and static corrections were understood conceptually, but processing remained slow and labor-intensive.
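
To make the NMO and CDP ideas in the list above concrete, here is a minimal modern sketch in Python. It is not a reconstruction of any analog workflow. It assumes a single flat reflector, a constant velocity, and the standard hyperbolic moveout relation t(x) = sqrt(t0^2 + (x / v)^2). All function names (`moveout_time`, `nmo_correct`, `stack`) and all numeric values are hypothetical and chosen only for illustration. Static corrections are left out.

```python
import math


def moveout_time(t0, offset, velocity):
    """Hyperbolic travel time t(x) = sqrt(t0^2 + (x / v)^2) for a flat reflector."""
    return math.sqrt(t0 * t0 + (offset / velocity) ** 2)


def nmo_correct(trace, offset, velocity, dt):
    """Flatten one trace: the output sample at t0 is read from the input at t(offset)."""
    n = len(trace)
    corrected = [0.0] * n
    for i in range(n):
        # Fractional sample index where the reflection from time i*dt was recorded.
        pos = moveout_time(i * dt, offset, velocity) / dt
        k = int(pos)
        if k + 1 < n:
            frac = pos - k
            # Linear interpolation between the two neighbouring input samples.
            corrected[i] = (1.0 - frac) * trace[k] + frac * trace[k + 1]
    return corrected


def stack(traces):
    """Average the traces sample by sample."""
    count = len(traces)
    return [sum(tr[i] for tr in traces) / count for i in range(len(traces[0]))]


# Synthetic gather; every value below is an illustrative assumption.
DT = 0.004          # sample interval in seconds
N_SAMPLES = 250     # samples per trace
VELOCITY = 2000.0   # constant velocity in m/s
T0 = 0.4            # zero-offset two-way time of the reflector in seconds
OFFSETS = [0.0, 400.0, 800.0, 1200.0]  # source-receiver offsets in metres

gather = []
for x in OFFSETS:
    trace = [0.0] * N_SAMPLES
    # Place a unit spike at the nearest sample to the hyperbolic arrival time.
    trace[round(moveout_time(T0, x, VELOCITY) / DT)] = 1.0
    gather.append(trace)

raw_stack = stack(gather)  # stacked without any moveout correction
nmo_stack = stack(
    [nmo_correct(tr, x, VELOCITY, DT) for tr, x in zip(gather, OFFSETS)]
)
peak_index = max(range(N_SAMPLES), key=lambda i: nmo_stack[i])
```

With these illustrative numbers, the uncorrected stack spreads the reflection over four separate samples, while the NMO-corrected stack concentrates it near the zero-offset time of 0.4 s. That concentration of energy is the signal-to-noise benefit that made stacking attractive even when each step had to be carried out slowly with analog equipment.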